Not all extreme weather can be attributed to climate change. (And climate scientists never said it could be.)

The summer (and fall) of 2021 brought a run of media-attracting heat waves, wildfires, floods and hurricanes. The news coverage that accompanied many (not all) of these natural disasters pointed to climate change as the culprit. It’s a playbook we’ve all become accustomed to: weather disaster strikes – trigger the climate-change headlines. And for good reason: there is scientific consensus that climate change is contributing to more severe heat waves, more intense rainfall, and higher sea levels.

But weather is – naturally – wildly variable. Deviations are, in fact, the norm. This is so blatantly obvious (to lay-people and scientists alike) that it often goes unstated. It’s a fact that complicates attempts to determine whether a single weather event was “caused” by climate change. In fact, natural *climate* variability (multi-month to decadal changes in the climate system) is also the norm. For example, we’re currently in a “La Niña” winter, which is typified by warmer and drier conditions in the southern U.S. and cooler and wetter conditions in the northern U.S. Fluctuations between La Niña and its opposite phase, El Niño, long predate anthropogenically forced climate change.

Attribution Science?

In the world of “climate change attribution science,” grappling with this natural variability is critical. Most folks outside of climate and weather circles have never heard of attribution science – but they’ve probably read the headlines informed by it. It’s a type of research intended to quantify how the probability of a type of weather event has changed due to anthropogenic emissions of greenhouse gases (e.g. CO2, methane).

For example, this summer, western Europe experienced levels of accumulated rainfall that resulted in catastrophic floods – killing over 200 people. Scientists estimated that there is a 1 in 400 chance of a rainfall event of this severity (and in this location) in a given year. A very rare event. An attribution analysis by the World Weather Attribution (WWA) initiative found that human-induced climate change made the event 1.2 to 9 times more likely than it would have been 100 years ago.
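To make those numbers concrete, here is a minimal sketch of the arithmetic behind a statement like “1.2 to 9 times more likely.” It assumes the 1-in-400 figure describes today's climate and that “times more likely” is a simple ratio of annual probabilities (often called a probability ratio). The function names are illustrative, and the “fraction of attributable risk” is a common companion metric in the attribution literature rather than something reported above.

```python
def counterfactual_probability(p_now, probability_ratio):
    """Annual probability in the cooler, counterfactual climate.

    Assumes probability_ratio = p_now / p_then.
    """
    return p_now / probability_ratio


def fraction_attributable_risk(probability_ratio):
    """Share of today's probability attributable to the change (1 - 1/PR)."""
    return 1.0 - 1.0 / probability_ratio


# Figures quoted in the text; treating 1-in-400 as today's annual odds is an assumption.
p_now = 1 / 400
pr_low, pr_high = 1.2, 9.0

for pr in (pr_low, pr_high):
    p_then = counterfactual_probability(p_now, pr)
    far = fraction_attributable_risk(pr)
    print(f"PR = {pr}: about 1 in {1 / p_then:.0f} per year a century ago, FAR = {far:.2f}")
```

Under these assumptions, the same rainfall event would have had annual odds of somewhere between roughly 1 in 480 and 1 in 3,600 a century ago. The width of that range is itself a reminder of how much uncertainty surrounds rare events.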

The WWA is an international collaboration of scientists who have been performing real-time analyses of extreme weather events since 2015. Their work is underpinned by the peer-reviewed methods of a broader community – scientists have been engaging in this line of research since the early 2000s.

In part, the attribution process consists of analyzing historical observations of similar events to see if there has been a detectable change in the last century. One of the biggest challenges in attribution science is determining if a signal of climate-change can be detected over natural weather and climate fluctuations.

Climate models (global and regional) are also used to simulate the statistics of the events in a counterfactual past: one in which emissions remained at pre-industrial levels. So being able to faithfully simulate the extreme phenomenon in question (and its response to atmospheric concentrations of greenhouse gases) is critical. And the reality is that models can’t simulate all events with the same level of fidelity.
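The counterfactual comparison can be illustrated with a toy calculation. The sketch below is not a climate model: it draws synthetic “annual maximum rainfall” values from two bell curves (every mean, spread and threshold is invented for illustration), counts how often each synthetic climate exceeds a threshold, and takes the ratio. Real attribution studies use physical model ensembles and extreme-value statistics instead.

```python
import random


def simulate_ensemble(mean, spread, n_years, rng):
    """Draw synthetic annual maxima; a stand-in for climate-model output."""
    return [rng.gauss(mean, spread) for _ in range(n_years)]


def exceedance_probability(samples, threshold):
    """Fraction of simulated years in which the threshold is exceeded."""
    return sum(1 for x in samples if x > threshold) / len(samples)


rng = random.Random(42)  # fixed seed so the sketch is repeatable
N_YEARS = 20_000         # many simulated years are needed to count rare events

# Hypothetical numbers: the "factual" climate is shifted slightly wetter.
counterfactual = simulate_ensemble(mean=100.0, spread=15.0, n_years=N_YEARS, rng=rng)
factual = simulate_ensemble(mean=106.0, spread=15.0, n_years=N_YEARS, rng=rng)

THRESHOLD = 130.0  # hypothetical severity of the observed event

p_counterfactual = exceedance_probability(counterfactual, THRESHOLD)
p_factual = exceedance_probability(factual, THRESHOLD)
probability_ratio = p_factual / p_counterfactual

print(f"Counterfactual climate: {p_counterfactual:.4f} per year")
print(f"Factual climate:        {p_factual:.4f} per year")
print(f"Probability ratio:      {probability_ratio:.1f}")
```

Even in this toy setting, a modest shift in the average produces a much larger change in the odds of the extreme. It also shows why fidelity matters: if the simulated distribution is wrong in its tail, the ratio will be wrong too.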

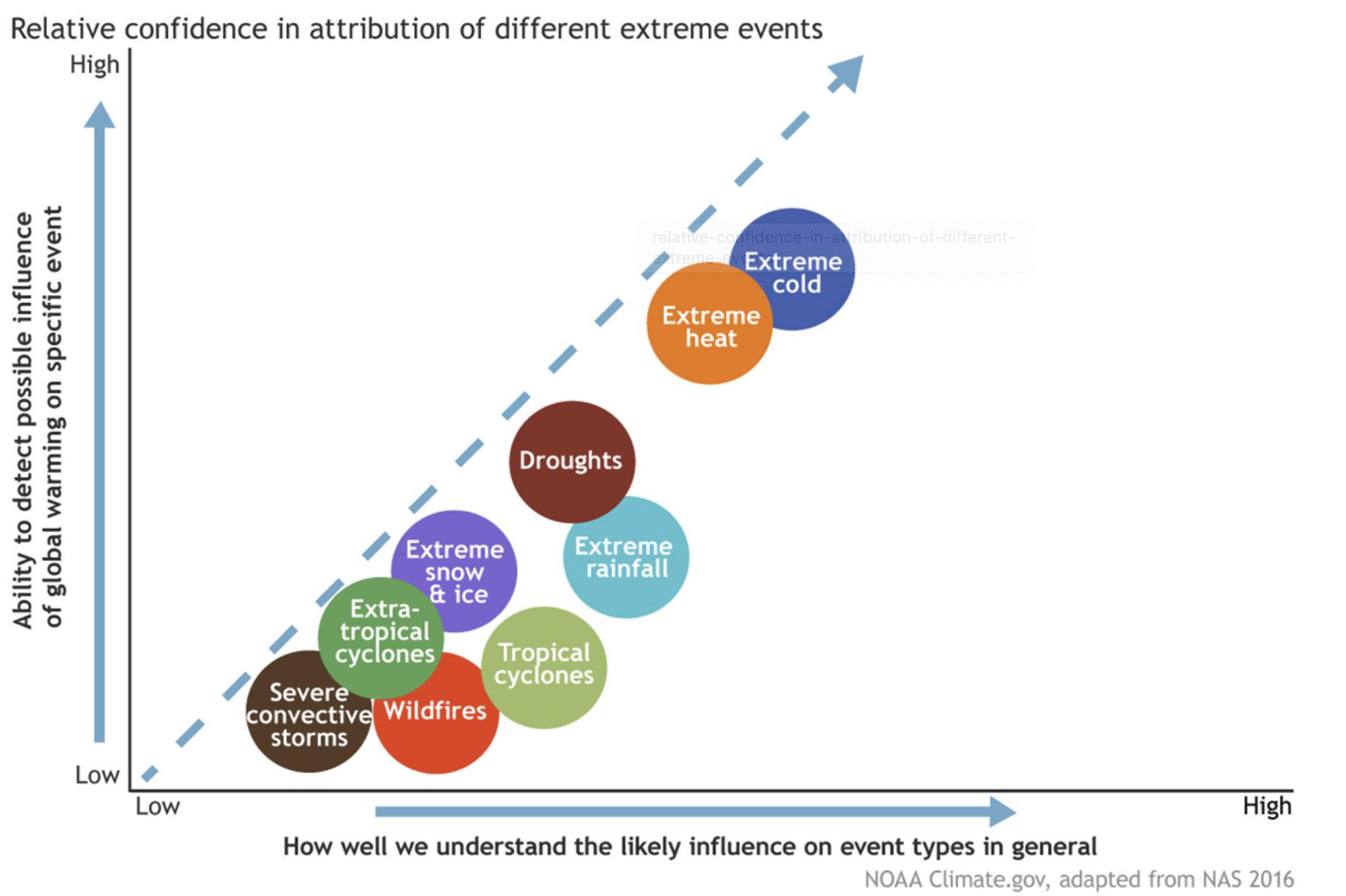

There is, in fact, a general hierarchy of how readily phenomena lend themselves to attribution: extremes of heat and cold are the most amenable to accurate attribution, while convective storms (and their sub-perils like tornadoes and hail) are the most difficult (see image).

Not all extreme events have a detectable climate-change signal

The WWA found that the exceptionally rare Pacific Northwest heatwave (the “heat dome”) that shocked the U.S. in June was made at least 150 times more likely by climate change.

But not all extreme events can be attributed to climate change. The WWA reported that the ongoing, multi-year drought in Southern Madagascar (which has been linked to severe famine in the region) cannot be attributed to climate change (“trends remain overwhelmed by natural variability”). Another example? The recent, unseasonable cluster of tornadoes that devastated parts of Kentucky (and nearby states). For a number of reasons, tornadoes are difficult to attribute. One reason is the spatial scale – the vast majority of models used for attribution simply don’t operate at high enough resolution to simulate how the statistics of tornado clustering will change. A second reason is that the physical reasoning about the impact of climate change on tornadoes is ambiguous – making it difficult to justify a strong hypothesis about what the future holds.

Why should anyone care?

There are a number of reasons why attribution science is an important part of climate-change fluency. It creates a framework for evidence-based conversations about whether and how our lives are being impacted by climate change. Folks will be seeing more and more of this in the media. In 2020, the NOAA Climate Program Office announced that it had funded a half dozen research projects aimed at advancing the science and deployment of rapid attribution analysis.

This could be a game-changer for climate change litigation. There have been over 1,000 legal actions brought against major oil and gas producers. But a recent study out of Oxford University has found that many of the cases are not making full use of the latest advances in attribution science.

As these cases become more successful, corporate emissions will increasingly be seen as investor liabilities.

Alicia Karspeck has a Ph.D. in climate modeling from Columbia University, worked at the National Center for Atmospheric Research for over 12 years, and is a co-founder of SK Analytics. She is focusing on bringing climate-ESG and financial-factor insights to risk management practices for long-term investors.